A Few Stats About Us

Delivering Reliable Solutions for Your Business Since 2014.

Learn more about the journey of Samarpan Infotech, our core values, and our client-centric approach to seamless IT solutions.

8+

Years of Experience

90%

Client Retention

470+

Projects

105+

Happy Clients

30+

IT Professionals

12,500+

Cups of Coffee

What Our Clients Say

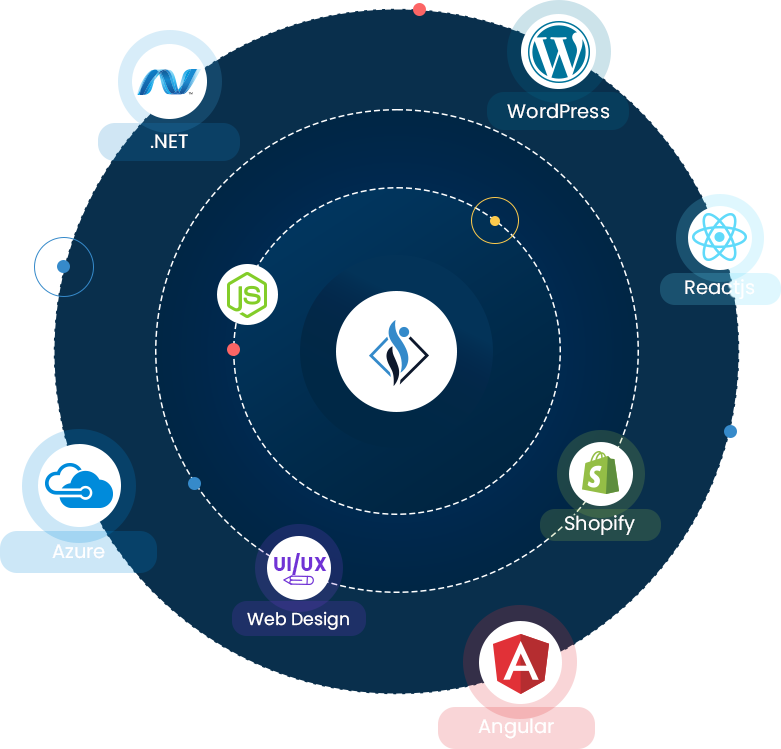

Our Services

Full Stack Software Development & Web UI/UX Services

.NET Development

Our goal is to deliver reliable .NET development services to every business niche. If you need .NET solutions, BI tool development, or chatbot development, we have the right team.

Azure Cloud Consulting

We deliver cost-effective cloud automation, integration, and PaaS services. We are your Azure consulting partner.

Angular Development

We deliver robust Angular development services for your unique idea. Our Angular developers are skilled at building web apps tailored to your requirements.

UI/UX Design

We accelerate the growth of startups and enterprises with inventive, responsive website designs. We deliver website design, UI design, graphic design, live mockups, and much more.

WordPress Development

Want your business to stand out? WordPress website development is the right solution for you. Get a search-engine-ready website that helps you rank among the top search results.

SEO Services

We provide affordable SEO services worldwide with a team of experienced SEO professionals. Get better search visibility with no false promises.

Latest Insights

Keep Your Knowledge Up to Date with the Latest News, Tips, and Updates

Squarespace has emerged as a popular website builder and e-commerce platform, offering a comprehensive solution for businesses looking to es...

Running a Shopify store? Think of it like caring for a garden: both need regular love and attention to flourish. Just like a gardener trims ...

In this article, we’ll explore how to create an author bio section and author page for a custom post type in WordPress without using a...